If you are like most business leaders I talk to in Central Texas, your team is already experimenting with AI.

Someone just turned on Microsoft 365 Copilot.

Your marketing agency is testing AI tools to write email campaigns.

Maybe your sales team is dabbling with an AI-powered CRM or chatbot.

AI feels like the next big productivity wave—because it is.

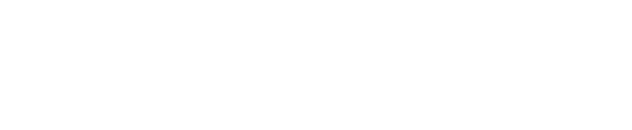

But a recent Microsoft disclosure about Copilot quietly reading emails labeled “confidential” is a good reminder of the part nobody wants to talk about: what these tools can actually see.

Let’s unpack what happened, why it matters for your business, and how you can move forward with AI on purpose, not by trial and error.

The Incident: When “Confidential” Wasn’t Quite Confidential

In February 2026, Microsoft acknowledged a bug in Microsoft 365 Copilot Chat. In certain scenarios, Copilot could access and summarize emails that were labeled as confidential—even when data loss prevention (DLP) rules were supposed to keep that content more tightly controlled.

The good news: this did not mean those emails were suddenly visible to the entire company or the outside world.

The bad news: it showed how easy it is for AI tools to cross lines that leaders assumed were clearly drawn.

If an AI assistant can read emails labeled “Confidential” and pull details into a summary, what else could it be reaching in your environment?

- Executives’ email threads about a potential acquisition

- HR conversations about sensitive personnel matters

- Client documents and contracts covered by NDAs

When you zoom out, that is not just a “Microsoft bug.”

It is a wake-up call for how all of our AI tools interact with the systems that hold our most important information.

The Real Problem: AI Adoption Without a Plan

Across the country, roughly 4 in 5 small businesses are expected to be using AI marketing tools by the end of this year. Add in productivity tools like Copilot, AI features in CRMs, chatbots on your website, and “smart” line-of-business apps, and the picture becomes clear:

- More AI

- Touching more systems

- With more access to more data

From the outside, it looks like innovation.

On the inside, many leaders are quietly thinking:

- “I do not want to fall behind on AI.”

- “I also cannot afford a headline about us mishandling client or employee data.”

You should not have to choose between the two.

The problem is not AI itself. The problem is AI adoption without a plan—turning tools on because they are exciting or easy, without first asking, “What should this tool be allowed to see?”

The Guide: A Local Partner Who Lives in Microsoft 365 and Security

This is where a trusted IT partner earns their keep.

At CTTS, we work every day with Central Texas organizations that run on Microsoft 365, line-of-business apps, and a growing stack of AI-powered tools. We see the good side of AI—faster reports, better insights, smoother communication—and we see where things can go sideways.

Our job is to help you:

- Use AI confidently, so your team is not stuck in the past.

- Protect sensitive data, so you can look clients, employees, and regulators in the eye.

You do not need another vendor pushing you toward “the next big thing.” You need a guide who understands your business, your risk tolerance, and the systems you already own.

The Plan: Turn On AI With Guardrails, Not Guesswork

Here is a simple, practical path we walk through with our clients.

1. AI Readiness & Risk Review

We start by asking three basic questions:

- How is your team using AI today (officially and unofficially)?

- Where does your sensitive data live—email, SharePoint, Teams, CRM, finance systems, HR tools?

- Which AI-connected tools have access to that data right now?

You cannot protect what you do not see. This step often surfaces “shadow AI”—tools that individual departments turned on with good intentions, but without IT oversight.
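For teams that like to see this written down, the three questions above can be sketched as a simple inventory check. This is only an illustrative sketch, not a tool we ship or a Microsoft 365 API: the `AITool` class, the `review` function, the tool names, and the list of sensitive data sources are all hypothetical placeholders you would replace with what your own review turns up.

```python
from dataclasses import dataclass, field

# Hypothetical list of places where sensitive data lives (question 2).
SENSITIVE_SOURCES = {"email", "sharepoint", "teams", "crm", "finance", "hr"}


@dataclass
class AITool:
    """One AI tool in use, official or unofficial (question 1)."""
    name: str
    approved_by_it: bool
    data_sources: set[str] = field(default_factory=set)


def review(tools):
    """Return (name, status, sensitive sources touched) per tool (question 3)."""
    findings = []
    for tool in tools:
        touched = sorted(tool.data_sources & SENSITIVE_SOURCES)
        if not touched:
            continue  # tool does not reach any sensitive data
        status = "approved" if tool.approved_by_it else "shadow AI"
        findings.append((tool.name, status, touched))
    return findings


# Example inventory; every entry here is made up for illustration.
inventory = [
    AITool("Microsoft 365 Copilot", True, {"email", "sharepoint", "teams"}),
    AITool("Marketing email writer", False, {"crm", "email"}),
    AITool("Website chatbot", False, {"faq"}),
]

for name, status, touched in review(inventory):
    print(f"{name} ({status}): touches {', '.join(touched)}")
```

Even a short list like this makes the conversation concrete: anything marked "shadow AI" that touches sensitive data is the first thing to look at.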

2. Lock In Guardrails

Next, we put sensible boundaries in place so AI can help without oversharing:

- Tightening permissions and access to sensitive mailboxes, Teams, and document libraries

- Reviewing and refining sensitivity labels and DLP policies in Microsoft 365

- Deciding where features like AI watermarking and activity logging make sense

- Documenting simple, human-readable usage guidelines so your team knows what not to feed AI tools

The goal is not to slow everything down. The goal is to make sure the right people—and the right tools—are seeing the right information for the right reasons.

3. Ongoing Check-Ins as AI Evolves

AI features are changing monthly. That means your guardrails cannot be a one-time project.

We set up a simple rhythm—often quarterly—to:

- Review new AI capabilities in tools you already own (like Microsoft 365)

- Revisit permissions and policies as your team shifts how they work

- Add new, safe AI use cases that actually move the needle for your business

Over time, you get a culture where AI is part of the way you work—but on your terms.

What Happens If You Ignore This?

Most organizations do not feel the pain right away.

AI features get turned on.

People move faster.

Nothing explodes.

Until one day, something does.

A confidential email thread gets surfaced in the wrong context.

A chatbot shares more customer detail than it should.

An employee pastes sensitive data into an AI tool that is not covered by your agreements.

Now you are dealing with:

- Embarrassing conversations with clients or employees

- Hours of investigation and cleanup

- Potential legal and regulatory exposure

- A leadership team asking, “How did we let this happen?”

All of which could have been avoided with a plan measured in days—not months.

A Better Story: Confident AI, Protected Data

Imagine a different path for your organization:

- Your team uses Copilot and other AI tools every day to draft, summarize, and analyze.

- You know which systems they can and cannot touch.

- You have clear documentation of your controls and policies.

- When new AI features roll out, you evaluate them calmly instead of reacting in a panic.

That is not wishful thinking. It is what we are building with businesses across Central Texas right now.

Ready for an “AI Readiness” Conversation?

If you are a business leader in Central Texas and any part of this hits close to home, now is the right time to act.

You do not have to become an AI expert.

You just need a partner who understands both the technology and the responsibility that comes with it.

Reach out and ask for an AI readiness and risk review. We will look at how you are using tools like Microsoft 365 Copilot and your other AI platforms today, where your sensitive data lives, and what needs to be tightened up.

From there, we will give you a clear, practical path so you can move fast with AI—and protect the people who trust you with their information.

Frequently Asked Questions

1. Can AI tools like Microsoft 365 Copilot read confidential emails?

AI tools such as Microsoft 365 Copilot can analyze and summarize information that users already have permission to access within the Microsoft 365 environment. In early 2026, Microsoft disclosed a bug that allowed Copilot Chat to summarize emails labeled “confidential” in certain scenarios, even when data loss prevention rules were intended to limit that access.

While the emails were not exposed to the entire company or outside parties, the situation highlighted how AI tools can interact with sensitive data in ways organizations may not fully expect.

2. What risks do businesses face when adopting AI without a plan?

When organizations enable AI tools without clear policies and safeguards, those tools may gain access to sensitive information stored across systems like email, SharePoint, Teams, HR platforms, or CRM applications.

This can lead to accidental exposure of confidential communications, client records, employee information, or proprietary documents. Without guardrails, businesses may face reputational damage, regulatory concerns, and time-consuming investigations if sensitive data is surfaced in the wrong context.

3. How can businesses safely adopt AI tools like Copilot?

Businesses can safely adopt AI by first conducting an AI readiness and risk review. This process typically includes identifying how employees are currently using AI tools, locating where sensitive data lives across the organization, and reviewing which systems AI tools can access.

From there, companies can implement guardrails such as refined permissions, sensitivity labels, data loss prevention policies, and clear guidelines for employees on how to use AI responsibly. Regular reviews ensure those safeguards keep pace as AI capabilities evolve.

Contact CTTS today for IT support and managed services in Austin, TX. Let us handle your IT so you can focus on growing your business. Visit CTTSonline.com or call us at (512) 388-5559 to get started!